Automated Data-Persistence in kdb

Terry Lynch presented a system that lets end users upload their own data to kdb and store it safely and reliably. Below is a quick outline of his talk and a link to his PowerPoint. I found it extremely interesting, as I've seen the need for something like this in a few places but never actually seen such a good solution built.

Background Information: The Schonfeld Environment

- A trading and investment firm operating since 1988 under Steven Schonfeld, once privately held and recently registered with the SEC as an investment adviser

- Invests its capital with portfolio managers engaging in a variety of strategies including quant stat-arb, fundamental equity/relative value and tactical

- Adopted kdb+ in 2008 as part of a technological overhaul of ageing systems

- 40+ trading groups, many using kdb either in a direct or hosted capacity

- 50+ different datasets across all asset classes, all vendors, with deep history

- Multiple high-throughput tickerplants covering level1, level2 and newswires

- Almost 1 petabyte of data in kdb format and growing continuously

- Emphasis on using kdb as a driver of a shared research environment

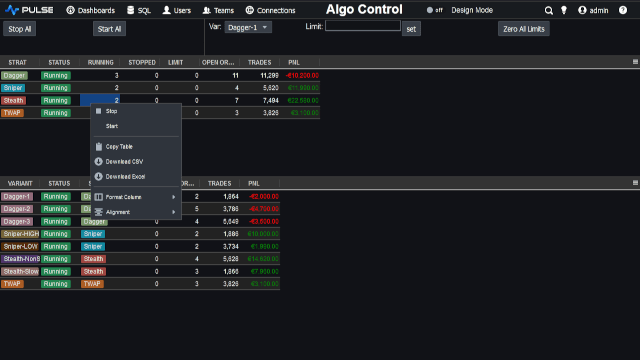

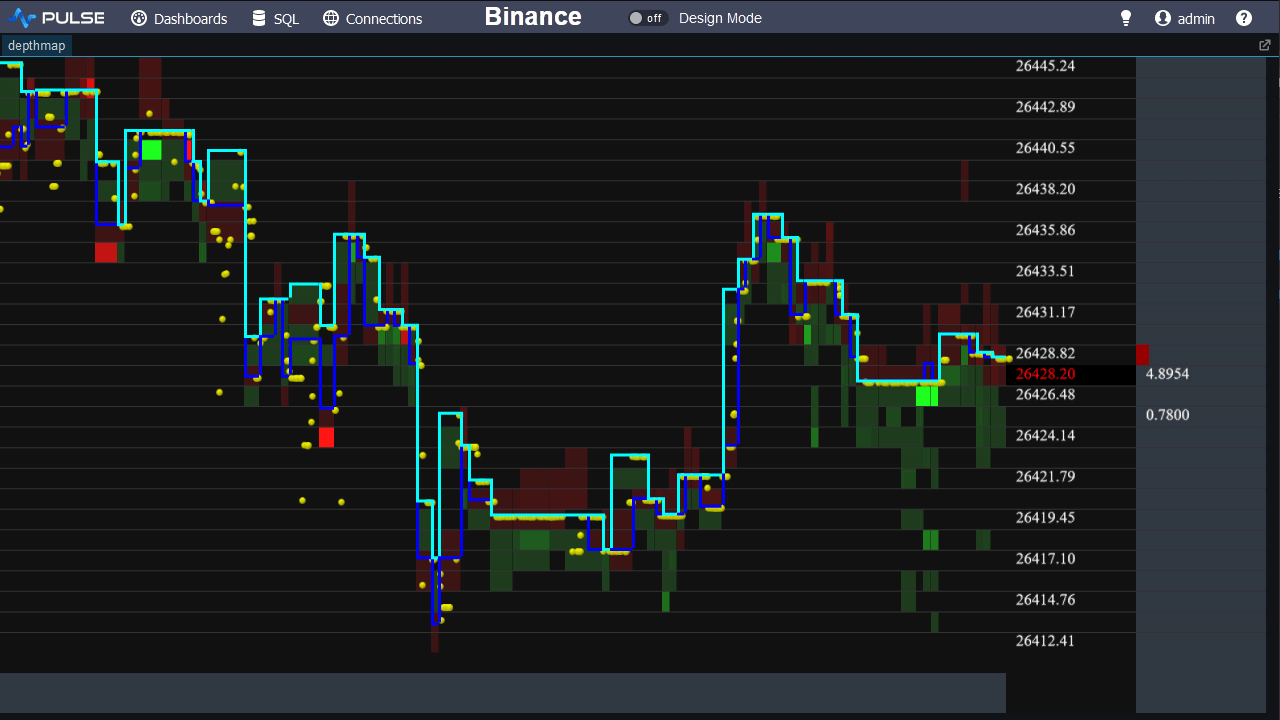

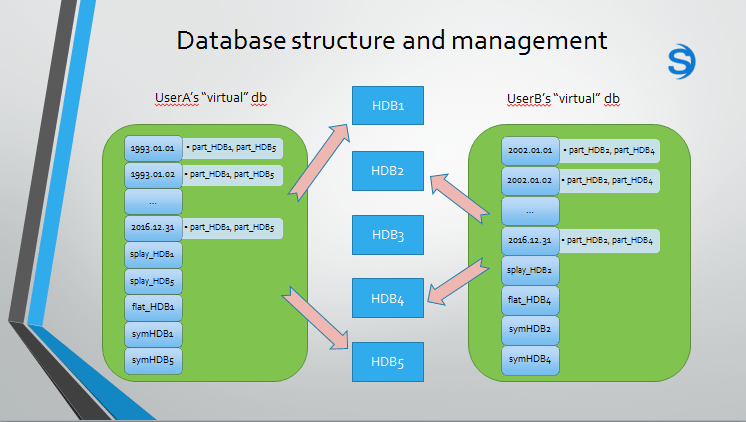

Typical Database structure and management

The next challenge...

- Given this environment, and given that each user has unique data requirements, proprietary code and closely-held trade strategies, three challenges arise...

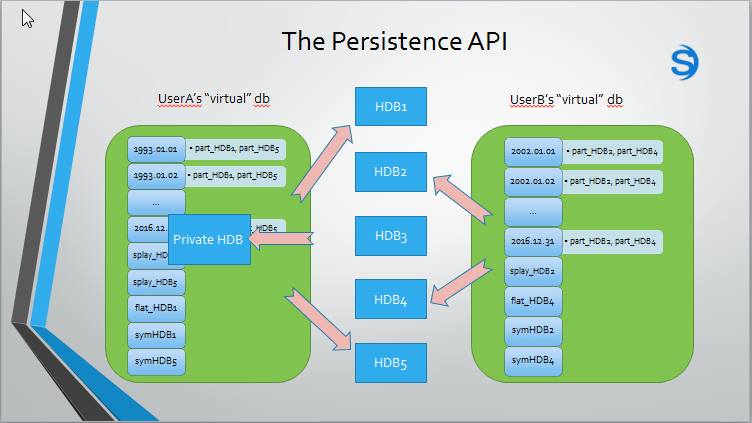

- How can a user persist their own (derived) data to this "virtual" db in a manner that is optimal, safe, private and instantly visible in their vdb?

- How can a user automate/schedule such derived datasets without oversight?

- How can a user perform quality control tests to maintain the integrity of this private data?

- This results in the need for APIs/tools which can achieve the above by:

- Giving the user a certain amount of control but not too much control

- Performing various checks/optimisations under the covers transparently to the user

- Alerting the users of any data discrepancies based on custom pre-defined criteria
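As a rough illustration of the last two points, here is a minimal sketch of a persistence wrapper that validates a table and runs user-defined checks before anything is written. It is not code from the talk: the function names (`validate_schema`, `run_checks`, `persist`), the rule format and the list-of-dicts table representation are all assumptions made for the example, and Python is used only to keep it self-contained.

```python
from typing import Callable, Dict, List

Row = Dict[str, object]
Rule = Callable[[List[Row]], bool]  # a rule returns True when the data passes


def validate_schema(rows: List[Row], required: List[str]) -> None:
    """Reject tables with missing columns before anything is written."""
    for i, row in enumerate(rows):
        missing = [c for c in required if c not in row]
        if missing:
            raise ValueError(f"row {i} is missing columns: {missing}")


def run_checks(rows: List[Row], rules: Dict[str, Rule]) -> List[str]:
    """Return the names of the user-defined rules that failed."""
    return [name for name, rule in rules.items() if not rule(rows)]


def persist(rows: List[Row], required: List[str], rules: Dict[str, Rule],
            writer: Callable[[List[Row]], None]) -> List[str]:
    """Hypothetical entry point: write only if every check passes.

    `writer` stands in for whatever actually stores the data.
    The returned list holds the alerts to send to the user.
    """
    validate_schema(rows, required)
    alerts = run_checks(rows, rules)
    if not alerts:
        writer(rows)
    return alerts


# Example: one price is negative, so the write is skipped and an alert is raised.
rows = [{"sym": "AAA", "px": 10.0}, {"sym": "BBB", "px": -1.0}]
rules = {
    "non_empty": lambda r: len(r) > 0,
    "positive_px": lambda r: all(x["px"] > 0 for x in r),
}
stored: List[Row] = []
alerts = persist(rows, ["sym", "px"], rules, stored.extend)
```

The user supplies only the data and the rules; the wrapper decides whether the write happens, which is one way to give "a certain amount of control but not too much".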

Persistence API

The Solution...

Conclusion

- In summary, we have created three useful tools that enable non-expert kdb users to use the platform more effectively and efficiently

- The persistence API, scheduler and quality control framework combine to form a private, safe, unified and controllable environment for our users

- It also helps to alleviate some burden from our in-house kdb development team by offloading data creation and maintenance to the users themselves

- This can also reduce the time it takes to set up new datasets in a production environment, as the users do not need to rely on or wait for our in-house team

- It allows users to maintain a level of secrecy by having direct and protected access to their proprietary q code, trading models and derived datasets

This presentation was by Terry Lynch