New SQL Docs

We just launched a new SQL documentation website, sqldock.com, to allow easier integration between Pulse / qStudio and the docs.

More updates on this integration will be announced shortly. 🙂

We have been working on version 2.0 of Pulse with a select group of advanced users for weeks now. To give you a preview of one new feature, check out the markers shown on the chart below. We have marker points, lines and areas. For example, this will allow adding a news event to a line showing a stock price. This, together with many other changes, should be released soon as part of 2.0.

Pulse is specialized for real-time interactive data, so it needs to be fast, very fast. When we first started building Pulse, we benchmarked all the grid components we could find and found that SlickGrid was just awesome. 60East did a fantastic writeup on how SlickGrid compares to others. As we have added more features, e.g. column formatting, row formatting and sparklines, it’s important to constantly monitor and test performance. We have:

Today I wanted to highlight how our throughput tests work by looking at our grid component.

To test throughput we:

Video demonstrating 21,781 rows being replayed as 435 snapshots over 16 seconds, i.e. about 27 updates per second (European TV updates at 25 FPS).

Update: After this video we continued making improvements and with a few days more work got to 40 FPS.
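The figure quoted for the video is simply the number of snapshots divided by the elapsed time. A minimal Python sketch of that arithmetic follows; the function names are illustrative and not part of Pulse.

```python
def updates_per_second(snapshots: int, seconds: float) -> float:
    """Average number of snapshot updates rendered per second."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return snapshots / seconds


def rows_per_snapshot(rows: int, snapshots: int) -> float:
    """Average number of rows carried by each snapshot."""
    if snapshots <= 0:
        raise ValueError("snapshots must be positive")
    return rows / snapshots


# Figures quoted for the video above.
rate = updates_per_second(snapshots=435, seconds=16)
avg_rows = rows_per_snapshot(rows=21_781, snapshots=435)
print(f"{rate:.1f} updates per second, {avg_rows:.1f} rows per snapshot on average")
```

The same two functions can be reused for any replay run, which makes it easy to compare results before and after an optimization.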

We then examine in detail where time is being spent. For example we:

Then we try to improve it!

Often this means looking at micro-optimizations such as reducing the number of objects created. For example, the analysis of how to format columns is only performed when the columns change, not when data is updated with the same schema. The really large wins tend to come from optimizing for specific scenarios, e.g. a lot of our data is timestamped and received mostly in order. But those optimizations are for a later post.
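The column-formatting optimization can be sketched roughly as below. This is an illustration in Python, not the Pulse implementation: the `ColumnFormatCache` class, the `analyse_schema` function and the schema representation are all assumptions made for the example.

```python
from typing import Callable, Dict, List, Optional, Tuple

Schema = Tuple[Tuple[str, str], ...]  # ((column name, type name), ...)
Formatter = Callable[[object], str]


def analyse_schema(schema: Schema) -> Dict[str, Formatter]:
    """The comparatively expensive step: pick one formatter per column."""
    formatters: Dict[str, Formatter] = {}
    for name, type_name in schema:
        if type_name == "float":
            formatters[name] = lambda v: f"{v:,.2f}"
        else:
            formatters[name] = str
    return formatters


class ColumnFormatCache:
    """Re-run the analysis only when the schema differs from the last one seen."""

    def __init__(self) -> None:
        self._schema: Optional[Schema] = None
        self._formatters: Dict[str, Formatter] = {}
        self.analysis_runs = 0  # exposed so the saving is easy to observe

    def formatters_for(self, schema: Schema) -> Dict[str, Formatter]:
        if schema != self._schema:
            self._formatters = analyse_schema(schema)
            self._schema = schema
            self.analysis_runs += 1
        return self._formatters

    def render(self, schema: Schema, rows: List[dict]) -> List[List[str]]:
        fmt = self.formatters_for(schema)
        return [[fmt[name](row[name]) for name, _ in schema] for row in rows]


cache = ColumnFormatCache()
trades: Schema = (("sym", "str"), ("price", "float"))
first = cache.render(trades, [{"sym": "BTC", "price": 27123.5}])
second = cache.render(trades, [{"sym": "ETH", "price": 1850.0}])  # same schema: cached
quotes: Schema = (("sym", "str"), ("bid", "float"), ("ask", "float"))
third = cache.render(quotes, [{"sym": "BTC", "bid": 27120.0, "ask": 27125.0}])
print(cache.analysis_runs)  # three renders, but the analysis ran once per schema
```

Keying the cache on the schema alone means a stream of updates with unchanged columns pays only for formatting the values.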

We just announced a unique event that gathers 4 of the newest, most advanced databases for Finance into 1 hour:

If you work on big data in Finance, this is your chance to get an overview of the rapidly changing database landscape. TimeStored will be organizing a free online presentation in which each database company will present for 10 minutes on what is unique to their solution, bringing the top new technologies together in one place.

If you work with data, at some point you will be presented with a PowerPoint slide similar to this:

A wonderful fictional land, where we cleanly build everything on the layer below until we reach the heavens (in the past this was wisdom or visualization; increasingly it’s mythical AI).

There are two essential things missing from this:

Therefore the diagram should look more like this:

You start with data and you reach action, but at any stage, including after action, you can loop back to earlier stages in the cycle.

I’ve purposely blurred out the steps because it doesn’t matter what’s in between. In between should be whatever gets your team to the action quickest with an acceptable level of risk. Notice this is the software development lifecycle (SDLC). Software people spent years learning this lesson and it’s still an ongoing effort to make it a proper science.

What do you think? Am I wrong?

We want to be the best finance streaming visualization solution. To achieve that, we can’t just use off-the-shelf parts, so we have built our own market data order book visualization component from scratch. Its only dependency is WebGL. We call it DepthMap. It plots price levels over time, with the shading being the amount of liquidity at that level. It’s experimental right now but we are already receiving a lot of great feedback and ideas.
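To make the "price levels over time, shaded by liquidity" idea concrete, here is a small Python sketch of the underlying data layout. It is not DepthMap’s code (which renders with WebGL); the snapshot format and the `depth_grid` function are assumptions for illustration only.

```python
from typing import Dict, List, Tuple

# One snapshot per time step: price level -> resting quantity at that level.
Snapshot = Dict[float, float]


def depth_grid(snapshots: List[Snapshot]) -> Tuple[List[float], List[List[float]]]:
    """Return (price levels, grid) where grid[row][col] is the liquidity at
    price level `row` and time step `col`, scaled to 0..1 for shading."""
    prices = sorted({p for snap in snapshots for p in snap}, reverse=True)
    peak = max((q for snap in snapshots for q in snap.values()), default=0.0)
    grid = [
        [(snap.get(price, 0.0) / peak) if peak else 0.0 for snap in snapshots]
        for price in prices
    ]
    return prices, grid


book = [
    {100.0: 5.0, 101.0: 10.0},  # time step 0
    {100.0: 2.5, 102.0: 10.0},  # time step 1
]
levels, grid = depth_grid(book)
for level, row in zip(levels, grid):
    print(level, row)
```

Each row of the grid is one price level and each column one point in time, so a renderer only has to map the 0..1 values to a colour scale.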

Faster Streaming Data

A lot of our users were capturing crypto data to a database, then polling that database. We want to remove that step so Pulse is faster and simpler. The first step is releasing our Binance Streaming Connection. In addition to our existing kdb streaming connection, we are trialling WebSockets and Kafka. If this is something that interests you, please get in touch.

Our latest product, Pulse, is for displaying real-time interactive data direct from any database. To get the most benefit, the underlying databases need to be fast (<200ms queries). For our purposes, databases fall into 2 categories:

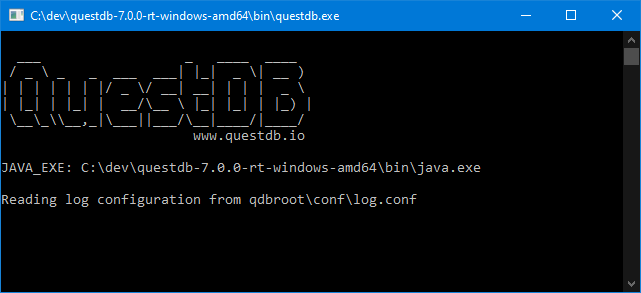

It’s very exciting when we find a new database that meets that speed requirement. I went to the website, downloaded QuestDB and ran it. Coming from kdb+, imagine my excitement at seeing this UI:

Good News:

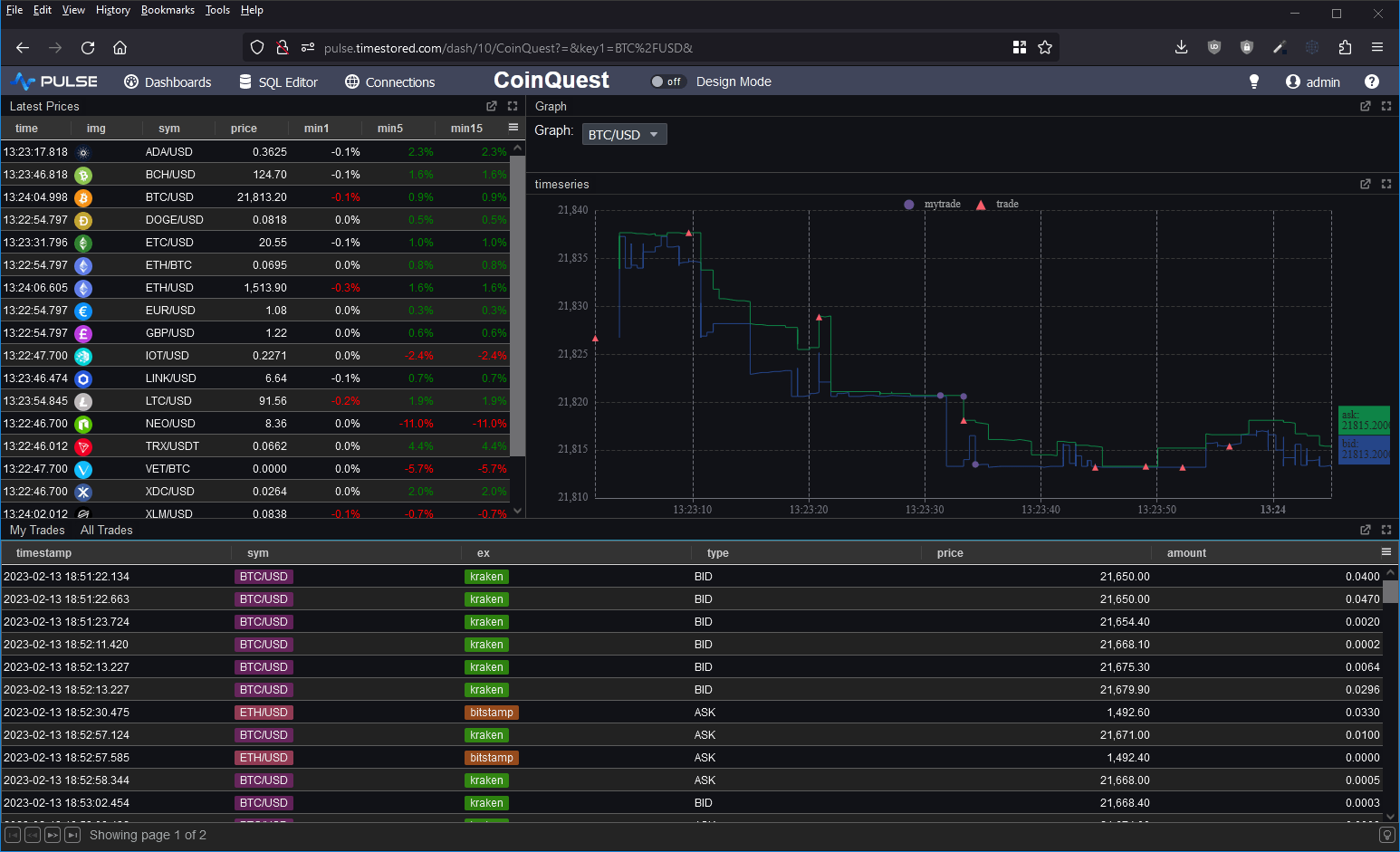

I wanted to take it for a spin and to test the full ingestion->store->query cycle. So I decided to prototype a crypto dashboard: consume data from various exchanges and produce a dashboard of latest prices, trades and a nice bid/ask graph, as shown below.

Within a very short time, I managed to get the database populated and the dashboard running live. This is the first time in a long time that a database has gotten me excited. It seems these guys are trying to solve the same user problems and ideas that I’ve seen everywhere. There were, however, some significant feature gaps.

I missed the kdb+ `time xasc (uj/)(table1;table2)` pattern for combining multiple tables into one. For the graph I had to use a lengthy SQL UNION.

In general kdb+ has array types and amazingly lets you use all the same functions that work on columns on nested structures. I missed that power.
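For readers who don’t know q, the `time xasc (uj/)(table1;table2)` pattern takes the union of several tables, keeping every column that appears in any of them, and then sorts the result by time. A rough Python analogue is sketched below. It uses plain lists of dicts and `None` for missing values, which is an assumption of this sketch; kdb+ fills with typed nulls.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]


def union_join(tables: List[List[Row]]) -> List[Row]:
    """Append rows from every table; columns missing from a row become None."""
    columns: List[str] = []
    for table in tables:
        for row in table:
            for name in row:
                if name not in columns:
                    columns.append(name)
    return [{name: row.get(name) for name in columns} for table in tables for row in table]


def sort_by_time(rows: List[Row]) -> List[Row]:
    """Ascending sort on the `time` column."""
    return sorted(rows, key=lambda row: row["time"])


bids = [{"time": 1, "bid": 99.5}, {"time": 3, "bid": 99.7}]
asks = [{"time": 2, "ask": 100.1}]
combined = sort_by_time(union_join([bids, asks]))
for row in combined:
    print(row)
```

The result is one time-ordered table with `time`, `bid` and `ask` columns, which is the shape a bid/ask graph needs.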

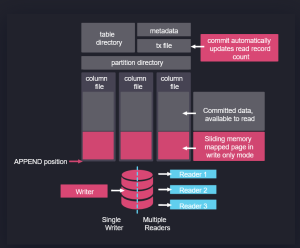

In fact, if you look at their architecture on the right, it’s obvious some of their team have used kdb+. Data is partitioned on date, with a separate folder per table and a column per file. Data is mapped in when read and appended when new data arrives.
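The layout described above (date partitions, a folder per table, a file per column, append on arrival) can be illustrated with a toy Python sketch. The nesting order, the `.col` extension and the text encoding are assumptions chosen for readability; real engines store binary column files and memory-map them rather than reading text.

```python
import tempfile
from pathlib import Path
from typing import Dict, List


def column_file(root: Path, table: str, date: str, column: str) -> Path:
    """Hypothetical layout: <root>/<table>/<date>/<column>.col"""
    return root / table / date / f"{column}.col"


def append_rows(root: Path, table: str, date: str, rows: List[Dict[str, object]]) -> None:
    """Append each value to the file for its column, creating folders as needed."""
    for row in rows:
        for column, value in row.items():
            path = column_file(root, table, date, column)
            path.parent.mkdir(parents=True, exist_ok=True)
            with path.open("a", encoding="utf-8") as handle:
                handle.write(f"{value}\n")


def read_column(root: Path, table: str, date: str, column: str) -> List[str]:
    """Read one column without touching the others."""
    return column_file(root, table, date, column).read_text(encoding="utf-8").splitlines()


root = Path(tempfile.mkdtemp())
append_rows(root, "trades", "2023-05-01", [{"sym": "BTC", "price": 27000.5}])
append_rows(root, "trades", "2023-05-01", [{"sym": "ETH", "price": 1850.0}])
price_column = read_column(root, "trades", "2023-05-01", "price")
print(price_column)
```

Because each column lives in its own file, a query that only needs `price` for one day never reads `sym` or any other date.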

In some ways this architecture predates kdb+ and originates from APL. It’s good to see new entrants like QuestDB and Apache Arrow pick up these ideas, make them their own and take them to new heights. I think kdb+ and q are excellent, and I was always frustrated that they remained niche while inferior technical solutions became massively popular. If QuestDB can take time-series databases and good technical ideas to new audiences, I wish them the best of luck!

Please leave any of your thoughts or comments below as I would love to hear what others think.

If you want to see how to set up QuestDB and a crypto dashboard yourself, we have a video tutorial:

Support for 30+ databases has now been added to both qStudio and Pulse.

ClickHouse, Redis, MongoDB, Timescale, DuckDB, TDengine and the full list shown below are all now supported.

Pulse is being used successfully to deliver data apps including TCA, algo controls, trade blotters and various other financial analytics. Our users wanted to see all of their data in one place without the cost of duplication. Today we released support for 30+ databases.

“My market data travels over ZeroMQ, is cached in Redis and stored into QuestDB, while static security data is in SQL Server. With this change to Pulse I can view all my data easily in one place.” – Mark – Platform Lead at Crypto Algo Trading Firm.

In particular we have worked closely with chosen vendors to ensure compatibility.

A number of vendors have tested the system and documented setup on their own websites:

Over the last few months, I’ve discussed grid components, aggregating and pivoting with a lot of people. You would not believe how much users want to see a good grid component that allows drill down and how strongly they hold opinions on certain solutions. I have examined a lot of existing solutions, everything from Excel to Power BI, Oracle, DuckDB, HyperTree, Grafana and Tableau. I think I’m beginning to converge these ideas and requests into a pivot table that will be a good solution for our users:

Well now the proposed interface looks like this:

A lot of the functionality inspiration should be credited to Stevan Apter and HyperTree. Ryan had seen HyperTree and loved the functionality and the beautiful kdb-only implementation. The challenge was to allow similar functionality for all databases while making it more accessible. We now have a working demo version.

If you love pivot tables and have never got to see your dream grid component come to fruition, we want to build it, so get in touch.

I won’t go through the full list of great presentations as Gary Davies has that excellently covered but I will highlight some trends I saw at kxcon 2023:

Alex Donohue’s presentation was packed with years of condensed knowledge, including the excellent diagram below showing typical user expertise. Notice how it transitions from backend kdb developers to frontend business users:

Looking at it this way makes it clear we need to provide APIs, as users want to express queries in their own language.